Anthropic CEO responds to Trump order, Pentagon clash

About the Author

Jo Ling Kent is a Senior Business and Technology Correspondent at CBS News, with over 15 years of experience reporting at the intersection of technology and business. Her coverage spans topics such as the impact of artificial intelligence, privacy on social media, global supply chains, and the rise of China's economy. She has won an Edward R. Murrow Award and received three Emmy nominations.

About the Guest

Dario Amodei, born in San Francisco in 1983, is the co-founder and CEO of Anthropic. He previously served as Vice President of Research at OpenAI, where he led the development of GPT-2 and GPT-3 and co-invented the reinforcement learning from human feedback (RLHF) method. In 2021, he co-founded Anthropic with his sister Daniela, focusing on AI safety research. As of February 2026, the company is valued at approximately $380 billion.

Opposing the government is the most American thing in the world. We are all patriots in this, and what we are defending are the values of this country.

I. Overview

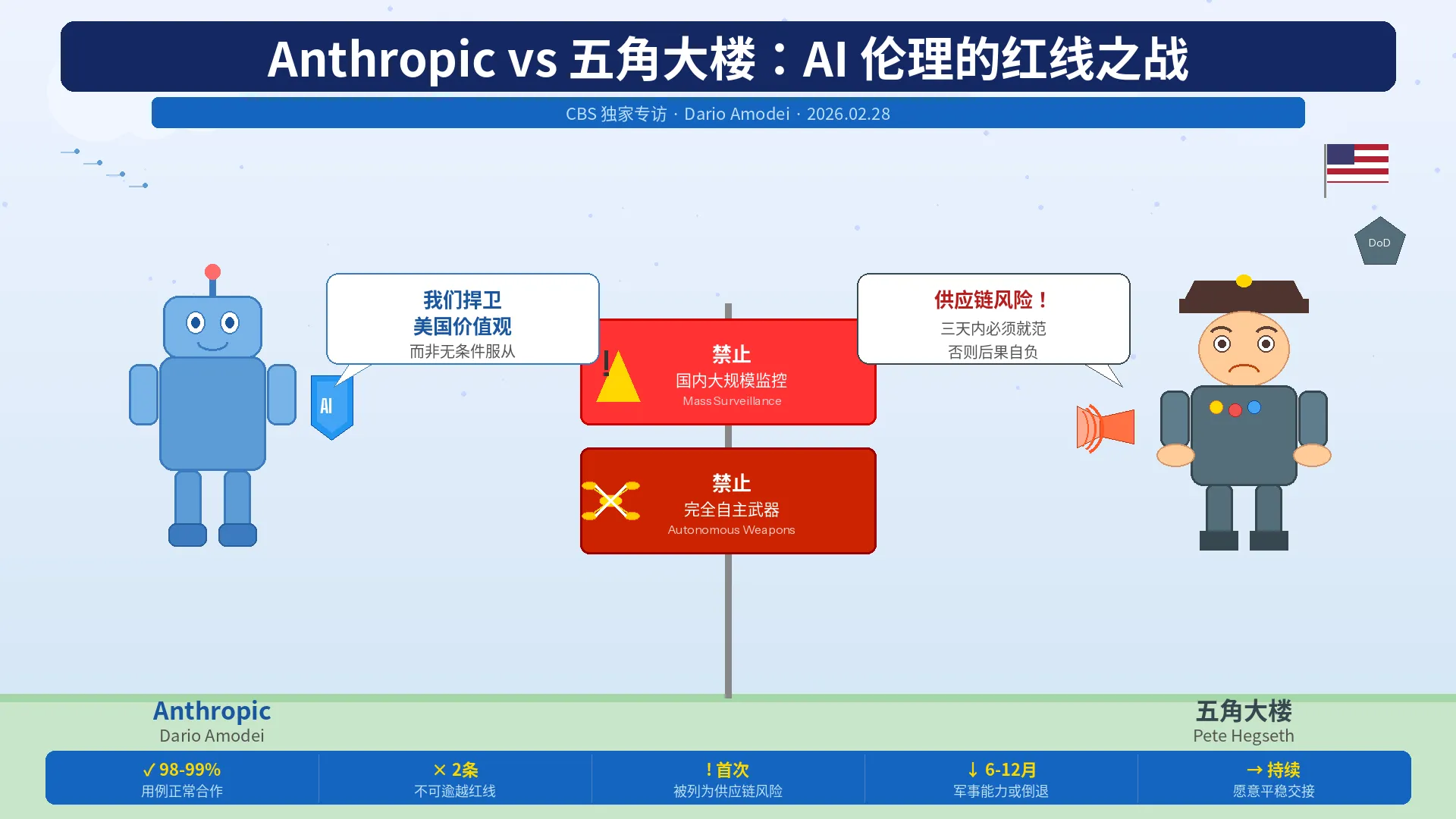

This exclusive interview took place just hours after the Pentagon designated Anthropic as a "supply chain risk." Amodei holds firm to two red lines: prohibiting AI from being used for domestic mass surveillance and for fully autonomous weapons. He characterizes the government's action as "retaliatory and punitive," noting that this supply chain designation has never before been applied to a U.S.-based company. He emphasizes that Anthropic is the AI company most actively cooperating with the military and is willing to continue serving national security within its red lines. He also states they will pursue legal action upon receiving official documentation. He believes his stance is fundamentally about defending America's democratic values, not opposing the government.

II. Details

1. Why Anthropic Refuses to Grant the Government Unrestricted AI Access

Anthropic claims to be the most proactive AI company in collaborating with the U.S. government: it was the first to deploy its model in classified cloud environments and the first to build custom models for national security, and its technology is already widely used by the intelligence community and military. Amodei clarifies that the company agrees with approximately 98% to 99% of the Defense Department's proposed use cases. It draws red lines around only two types of use. The first is domestic mass surveillance, where AI makes feasible data analysis that was previously legal but impractical, so the technology has outpaced current legal frameworks. The second is fully autonomous weapons, where current AI is fundamentally unpredictable and not reliable enough for independent decision-making, and where no accountability structure exists for large-scale unmanned combat systems.

2. Why a Deal with the Pentagon Could Not Be Reached

Negotiations took place under a tight three-day deadline. According to Amodei, the draft agreement the Pentagon presented to Anthropic ostensibly accepted the company's conditions but appended flexible clauses like "as the Pentagon deems appropriate," offering no substantial concessions. The Pentagon spokesperson's public statements consistently reiterated, "We only permit uses that are entirely legal," without effectively addressing Anthropic's specific exceptions. Amodei stresses that the entire negotiation timeline was dictated by the Defense Department, not Anthropic.

3. Response to Trump's Accusation that Anthropic "Endangers National Security"

Amodei states that even in the face of extreme government measures, Anthropic has committed to ensuring service continuity to help the Defense Department transition smoothly to other providers, avoiding disruptions to military operations. He notes that military personnel on the ground have told him losing Anthropic's technical support would set back relevant capabilities by 6 to 12 months or more, which is why they fought so hard for an agreement.

4. Reaction to Hegseth Listing Anthropic as a "Supply Chain Risk"

Amodei calls this move "retaliatory and punitive." He points out that this supply chain designation has never before been used against a U.S. company, having previously applied only to foreign entities such as Russian cybersecurity firm Kaspersky and Chinese chip suppliers. He also notes a legal error in Hegseth's announcement: the designation does not prohibit all companies with military contracts from working with Anthropic; it only restricts their use of Anthropic's services within those military contracts. Their commercial business with Anthropic remains entirely unaffected, so the practical impact is far less severe than the announcement suggested.

5. Why Americans Should Trust Anthropic's CEO to Set AI Red Lines

Amodei offers a two-part answer. First, as a private company, Anthropic has the right to decide the terms under which it provides services, and the government is free to choose other providers rather than resort to unprecedented coercive measures. Second, the rapid evolution of AI technology has outstripped the scope of current legislation and judicial interpretation. As the entity closest to the technological frontier, Anthropic temporarily bears the responsibility of holding certain boundaries, but long-term solutions must come from Congressional legislation. He acknowledges that disputes between private companies and the Pentagon are not an ideal long-term mechanism.

6. Why It's Necessary to "Draw a Line"

Asked why AI companies should be treated differently from aircraft manufacturers like Boeing, Amodei explains that aviation technology has a century of accumulated knowledge behind it, so military decision-makers clearly understand how it operates. In contrast, he says, AI's computational power doubles every four months, an unprecedented rate of change that legislation cannot keep pace with. He argues that until Congress catches up, maintaining temporary red lines is necessary. He adds that Anthropic was willing to research autonomous weapons prototypes with the Defense Department in a sandbox environment, but the Pentagon rejected this proposal.

7. The "Worst-Case Scenario" Americans Need to Know

Amodei identifies two types of risk associated with autonomous weapons. The first is reliability risk: current AI might misidentify targets, causing civilian casualties or fratricide, a fundamental technical problem that has not yet been solved. The second is accountability risk: if 10 million drones are controlled by a small group, the common-sense judgment and responsibility mechanisms built into the human chain of command would collapse completely, creating a dangerous concentration of power.

8. Response to Trump Calling Anthropic a "Woke Leftist Company"

Amodei denies that the company has any political affiliation, listing several instances of cooperation with the Trump administration: traveling to Pennsylvania with the President for an energy supply event; publicly agreeing with most of the AI Action Plan upon its release; and participating in policy initiatives for AI in healthcare. He states that Anthropic remains politically neutral, speaks out only on AI policy, and cannot control the political labels others apply to it.

9. Possibility of Reaching a Future Agreement with the Government

Amodei reiterates that the two red lines are non-negotiable but states that Anthropic remains open to an agreement if both sides can find common ground on principle. He emphasizes that an agreement requires effort from both parties, and Anthropic, out of consideration for national security, is always willing to cooperate.

10. Anthropic's Business Outlook and Legal Action

Amodei believes the practical impact of the supply chain designation is limited, as the law only restricts collaboration within military contracts, leaving non-defense business unaffected. He criticizes Hegseth's announcement as misleading and intended to create panic and uncertainty. He states that as of the interview, Anthropic had not received any official government documents, but that the company will mount a legal challenge once it receives formal notification.

III. Questions

- How are the technical boundaries precisely defined for Anthropic's two red lines (mass surveillance, fully autonomous weapons)? Will these thresholds adjust dynamically as AI reliability continues to improve?

- Amodei calls on Congress to fill the gap between AI and the law but acknowledges that Congressional action is slow. Until legislation arrives, who can legitimately act as the "temporary gatekeeper"?

- Competitor OpenAI accepted the Pentagon's "all legally permissible uses" clause around the same time. Does Anthropic's withdrawal effectively cede the decision-making power over unconstrained AI military applications to others?

- The government designated Anthropic as a supply chain risk while reportedly continuing to use Claude in active military operations (e.g., against Iran). What structural dilemma does this contradiction reveal between military dependence on AI and regulatory gamesmanship?

- Where should the boundary lie between private AI companies, acting as self-proclaimed "guardians of values" in setting usage red lines, and the legislative authority of a democratic government?

Original: Full interview: Anthropic CEO responds to Trump order, Pentagon clash